Handling large files in Plone with ore.bigfile

Plone The Application is great. Out of the box it has a set of sensible defaults and features that are useful for a wide range of use cases. You can install Plone and without having to make any adjustments start using it immediately.

The stack that Plone is built on is very flexible, but if you push the defaults hard enough you will begin to discover the limits. This is the scaling issue: how does the application perform under high load. For Plone, as well as many other web applications, a sure way to increase the load on the system is to have it process large files. What is a large file? The answer is ‘it depends’. For purposes of this post we will assume it is any file large enough to make Plone feel slow for the end user, or worse result in a time out.

To be clear, handling large files is not a problem exclusive to Zope/Plone, it is an issue with many application servers. This description of the issue come straight from the Rails community:

“When a browser uploads a file, it encodes the contents in a format

called ‘multipart mime’ (it’s the same format that gets used when

you send an email attachment). In order for your application to do

something with that file, rails has to undo this encoding. To do

this requires reading the huge request body, and matching each line

against a few regular expressions. This can be incredibly slow and

use a huge amount of CPU and memory.

While this parsing is happening your rails process is busy, and can’t

handle other requests. Pity the poor user stuck behind a request which

contains a 100M upload!”

http://therailsway.com/tags/porter

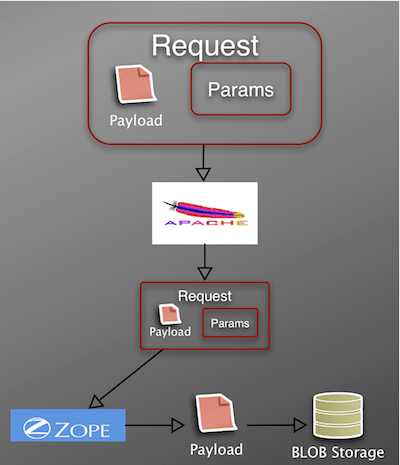

Plone has the same issue of thread blocking. Let’s take the example of a 15MB file uploaded to Plone, with the file contents ultimately stored as a BLOB in the ZODB.

- Browser encodes file data in multipart mime format (payload) and sends this in the request body along with additional parameters such as the name of the file, etc.

- Apache receives the file and forwards the request to Zope.

- Zope must undo the coding, which is both CPU and memory intensive. During this processing the Zope thread is blocked, thus unable to service additional requests. The risk is that, in a multiple user scenario, you can potentially tie up all available threads.

- Once Zope has parsed the request, it can then write the file data – in this case it writes the data to ZODB BLOB storage.

For many sites, having Zope process the file in the above manner is an adequate solution. The size of the uploads is not usually large, and the site traffic is not heavy. Recently however, we had a client who needed a high availability site that could handle large file uploads and downloads. We had to find a way to increase Plone’s stock file handling performance. In order to do this we partnered with Kapil Thangavelu to develop both an implementation strategy and supporting code.

The general strategy can be summed up as:

- Offload file encoding/unencoding and read/write operations from Plone.

- Web servers are really good at handling the above tasks.

- Unlike application servers, for web servers like Apache file streaming is fast and threads are cheap.

Learning from Rails

The first step is to move the file processing from Plone to Apache (or your web server of choice). A solution that comes from the Rails community is to use the ModPorter Apache module.

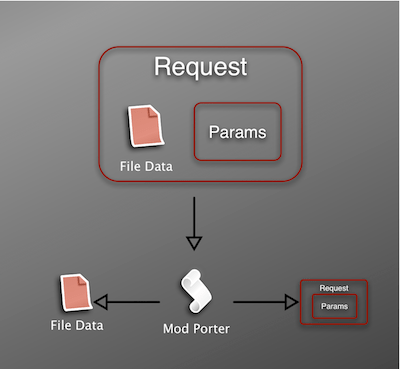

In a nutshell, ModPorter does the following to an incoming request.

- Parses the multipart mime data

- Writes the file to disk

- Modifies the request to contain a pointer to the temp file on disk

Clearly ModPorter is powerful magic, but we still have to integrate it with Plone. This is where Kapil’s ore.bigfile package comes into play.

ore.bigfile provides the following:

- A modified file upload widget that supports two upload methods

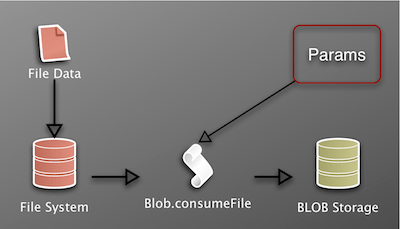

- Code to securely move the temp file created by the web server/ModPorter to BLOB Storage and link the data to a Plone File object (Blob.consumeFile).

- Code to provide an efficient method of downloading large files.

- An alternate (not CMFEditions) way of providing file versioning

With ore.bigfile in place, once ModPorter has finished writing the file data to disk, Blob.consumeFile is called with the necessary parameters to store the data in ZODB BLOB storage. The key is that Plone only has to process a lightweight request body to create the Plone File – the heavy lifting has already been done by Apache.

I mentioned that the file upload widget supports two upload methods. The first method supports uploading via the browser, as described above. The second method supports processing a file that was uploaded using an FTP client. Browsers in general are unreliable for long upload operations. However most FTP clients support continuation in the event that the transfer is interrupted. So for very large uploads (again it depends on the speed and quality of your network), the file is first uploaded via FTP to an incoming directory. When a new File object is created in Plone, the user has a choice to upload a new file using the browser, or select from list of files that have already been uploaded to the incoming directory. Data files are swept from the incoming directory after they are used in the creation of a Plone File.

What goes up, must come down

Solving the upload problem only gets you half way there.

Downloading large files will also tie up a Zope thread until the file is passed entirely to the front-end webserver. The solution is to use the second feature of ore.bigfile, which offloads the serving of the file from Zope to the front-end web server. The initial request is still handled by Plone to ensure the necessary security checks are in place, but instead of having Zope read the data from BLOB storage, ore.bigfile constructs a custom response to send to the webserver. When using Apache, the response consists of an X-Sendfile header and a path to the file data in BLOB storage. This results in Apache reading the file directly from disk, instead of having to read the data from Zope. This ensures that the Zope thread is quickly released so that it can respond to new requests.

Where to go from here

To recap, the default installation of Plone is incredibly useful. It works because it makes as few assumptions as possible about the installation’s environment. However for most production sites you need to begin integrating other tools – such as a caching proxy – to maintain system performance. In the case of caching, Plone uses a combination of internal software (Cache-Fu for example) and supporting software (Varnsih, Squid, etc.) As with caching, efficient large file handling could be implemented in Plone by extending the code and concepts from ore.bigfile to provide utilities that could be configured to work with common front-end webservers.

Nice post, and great idea for dealing with big files. Very similar to what Tramline does, but now with blobstorage solves a lot of problems Tramline had (eg unable to report file size correctly, or do processing from within Plone on the file). I look forward to giving it a try myself.

How does it deal with security of the FTP files? Eg if Alice uploads a file via FTP, can Bob then add it to Plone before she gets to it?

-Matt

“How does it deal with security of the FTP files? Eg if Alice uploads a file via FTP, can Bob then add it to Plone before she gets to it?”

The sftp upload implementation is basic and geared towards having trusted super users, so Alice had better trust Bob. It does not, as they say, scale. The sftp ability was implemented so that files in the hundred MB+ category could be accommodated and not be limited by browser/network time-outs. With fast and reliable connections (relative to file size) the browser should be enough.

Very nicely covered. I’ll definitely have to take a look at ore.bigfile as it’s one of the bigger (no pun intended) issues I’ve been facing with our Plone instances.

Nice post Aaron, and very interesting subject.

A question: the same thread blocking issue happens also for read process? If more users access for reading big files inside Plone?

“A question: the same thread blocking issue happens also for read process?”

Yes. I describe it more briefly under ‘What goes up, must come down’. A light weight response is sent to Apache with the location of the file on disk (BLOB storage), Apache then streams the file data to client.

Oh, ok, now I get it.

This can be useful for simple media/video sites. The tramline road is more complicated!

Cool!

What about wsgi, instead of using ModPorter?

ore.bigfile could be adapted to work with a wsgi middleware, I am not aware of any that work the same as ModPorter.

Sounds very much like what Tramline did (invented within the Zope community years ago). Of course Tramline’s current implementation has some issues as it depends on mod_python which is not maintained anymore, so this sounds like a good replacement. ModPorter being a C module is good too, as it avoids passing all that data through Python (though I think Tramline did that reasonably well).

Note that hurry.file contains a file upload widget with tramline support and offers facilities to access files directly.

It’s not quite as bad as that for downloads. Blobs and other filesystem files (such as browser resources or Reflecto files) are served asynchronously without holding the Zope thread thanks to the filestream_iterator.

Sorry, I don’t get it. You mean that simply using Blob, for file download, you have no locked thread?

That’s my understanding, Luca.

John, you are right! I tested this manually!

A site without blob support is locking my zthread downloading a very bif file (and this is know).

After installing plone.app.blob and migrating the file, the thread is immediatly released.

Amazing!

The filestream_iterator writes the file to the HTTP response through a small size buffer but still locks the thread making this HTTP response.

The full file response is shared by xxx consecutive threads that provide file chunks if the client is capable of requesting partial content (see http://www.w3.org/Protocols/rfc2616/rfc2616-sec14.html at 14.16) with a Content-Range header. Flash movie players, Adobe PDF reader, VLC, Quicktime player do this. Unfortunately this is not the case of the browser itseld that makes a full query of the file.

Thanks for writing this up Aaron, I just noticed it.

Re Lawrence’s comment on download, bypassing the zope process entirely, is significantly more efficient than using a file stream iterator, using the sendfile implementation effectively removes both the front end server (apache) and zope from the mix, and basically has the kernel do the file transfer from vfs to socket with neglible userland overhead. With tools like filestream iterator your still effectively copying the file through the process memory in the zope process, and the apache process (although apache may spool till disk, again causing additional writes). Hence using sendfile is the most efficent possible way to get the bits out on the wire.

Re For FTP/SFTP usage, as aaron mention the original role was trusted super user, if security is a concern across roles, an additional extension/feature could implement per user upload directories.